At MIT, I spent my PhD working on a deceptively simple question: how do you teach a computer to understand the orientation of objects in 3D space?

The problem matters more than it sounds. When you look at a coffee mug sitting on a table, you instantly know which way the handle faces, whether it's upright or on its side, and exactly how you'd reach out to grab it. For a robot, this is extraordinarily difficult. A camera gives you pixels. Turning those pixels into a precise understanding of how an object is positioned and rotated — well enough to actually pick it up — requires serious mathematics.

The tool I developed to solve this was called the Quaternion Bingham distribution, a way of representing uncertainty about orientation in 3D space. Quaternions are a mathematical system for describing rotations, and the Bingham distribution captures the probability of different orientations — essentially letting the robot say "I'm 90% sure this part is facing this way."
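To make the idea concrete, here is a minimal sketch of how a Bingham density over unit quaternions can be evaluated. It is an illustration under stated assumptions, not the code from my thesis or from any product: the function names, the `(w, x, y, z)` component order, and the concentration values are all hypothetical, and the normalizing constant is left out, so the numbers are relative scores rather than true probabilities. The property worth noticing is that a quaternion `q` and its negation `-q` describe the same rotation, and the Bingham density assigns them the same value.

```python
import math


def normalize(q):
    """Scale a 4-tuple to unit length so it represents a rotation."""
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


def quat_from_axis_angle(axis, angle):
    """Unit quaternion (w, x, y, z) for a rotation of `angle` radians about `axis`."""
    n = math.sqrt(sum(c * c for c in axis))
    s = math.sin(angle / 2.0)
    return (math.cos(angle / 2.0), axis[0] / n * s, axis[1] / n * s, axis[2] / n * s)


def bingham_unnormalized(q, directions, concentrations):
    """Unnormalized Bingham density exp(sum_i lambda_i * (v_i . q)^2).

    `directions` are orthonormal 4-vectors and `concentrations` are
    non-positive; more negative values mean less spread along that direction.
    """
    q = normalize(q)
    exponent = 0.0
    for v, lam in zip(directions, concentrations):
        dot = sum(a * b for a, b in zip(v, q))
        exponent += lam * dot * dot
    return math.exp(exponent)


# Assumed example parameters: the most likely orientation is the identity
# rotation (1, 0, 0, 0), and uncertainty is equally tight in every direction.
DIRECTIONS = [(0, 1, 0, 0), (0, 0, 1, 0), (0, 0, 0, 1)]
CONCENTRATIONS = [-50.0, -50.0, -50.0]

identity = (1.0, 0.0, 0.0, 0.0)
flipped = tuple(-c for c in identity)  # same rotation, opposite sign
quarter_turn = quat_from_axis_angle((0.0, 0.0, 1.0), math.pi / 2)

score_identity = bingham_unnormalized(identity, DIRECTIONS, CONCENTRATIONS)
score_flipped = bingham_unnormalized(flipped, DIRECTIONS, CONCENTRATIONS)
score_quarter = bingham_unnormalized(quarter_turn, DIRECTIONS, CONCENTRATIONS)

print(score_identity, score_flipped, score_quarter)
```

With these assumed parameters, the identity orientation and its sign-flipped twin score the same, while a quarter turn about the z-axis scores far lower. Loosening one concentration (say, to `-1.0`) would express that the orientation is well known about two axes but uncertain about the third.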

I first applied this math to an unexpected problem: teaching a robot to play ping pong. To return a shot, a robot needs to track not just where the ball is going but how it's spinning — topspin, backspin, sidespin — because the spin determines what the ball will do when it bounces. The Quaternion Bingham distribution gave the robot a way to estimate the ball's spin in real time, fast enough to plan a return.

The ping pong work was fun, and it attracted attention. But the same mathematics turned out to solve a much more consequential problem. In manufacturing, one of the most common and tedious tasks is picking parts out of a bin. The parts arrive jumbled together in random orientations, and a worker — or a robot — has to figure out exactly how each one is sitting before it can be picked up and placed correctly. This is the "bin picking" problem, and it had resisted automation for decades precisely because of the orientation challenge. The same math that tracked spin on a ping pong ball could look at a cluttered bin of metal parts and determine the exact position and orientation of each one.

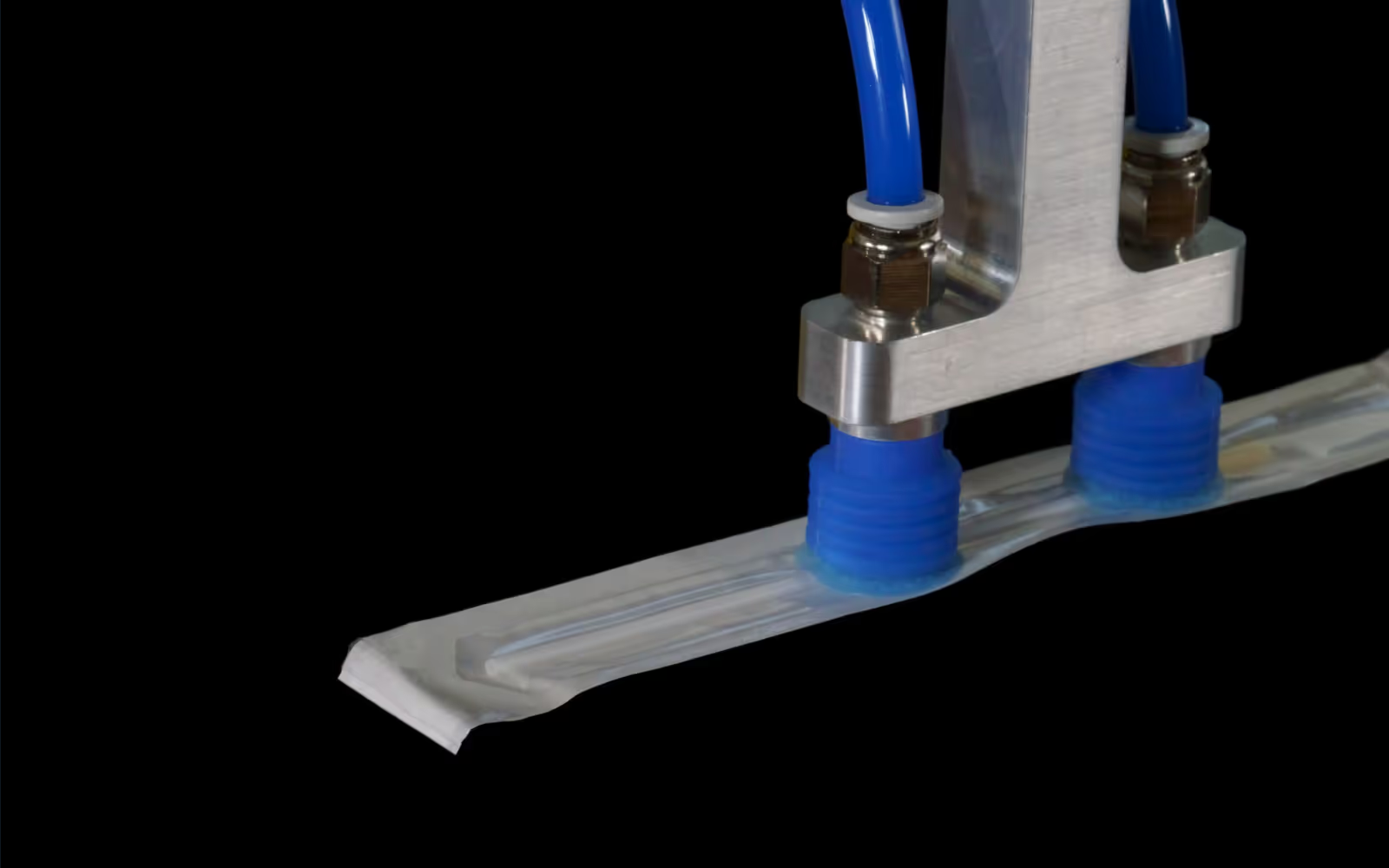

CapSen is short for Capio Sensus, Latin for "to grasp through perception." The name captures what the company does in two words. Our software gives robots the ability to perceive their environment in 3D and use that perception to grasp and place objects with precision. The double meaning of "grasp" is intentional: the robot physically grasps parts, and it does so because the software grasps the geometry of the scene.

We founded the company in 2014, and from the beginning it was built on two complementary foundations. The first was the computer vision and spatial intelligence research from my PhD. The second was reliability, and for that I have my co-founder, Mark Schnepf, to thank.

Mark and I first worked together at Two Sigma, the quantitative trading firm, where he was my manager. Mark had spent his career leading mission-critical software teams at companies across a range of industries: General Motors, Nasdaq, Motorola, and Two Sigma. In each of these roles, the common thread was that the software could not fail. When your code is executing trades in real time or running a production line, "mostly works" is not an acceptable standard.

I learned this firsthand. At Two Sigma, if our trading team in Singapore had any issues with our code, we got paged — at whatever hour the Singapore market happened to be open, which was often the middle of the night in New York. You learn very quickly what reliable software looks like when your phone buzzes at 3 AM and someone on the other side of the world needs your code to work right now.

Mark brought that discipline to CapSen. From day one, our software was designed to be production-grade — the kind that runs 24/7 on a factory floor without someone babysitting it. This might sound like a basic requirement, but in the robotics world, it's surprisingly rare. Many robotics companies start with impressive demos that fall apart under real production conditions. We started with the assumption that if our software isn't reliable enough to run an overnight shift with no one watching, it isn't ready.

The distance between a working algorithm and a working product is longer than most people realize. My thesis results were state-of-the-art, and I came into the company confident they would translate quickly to industrial applications. That confidence was premature. The real world is messier than a research lab — the lighting is inconsistent, the parts are scratched and oily, the bins are dented, and the robot has to perform the same task thousands of times a day without getting stuck.

Closing that gap took years of work with real customers on real production lines. Every deployment taught us something the lab never could. The algorithms evolved from their academic origins into something hardened by ten years of factory conditions. Today, our spatial intelligence software is used by manufacturers who need their robots to see, understand, and handle objects in cluttered, unstructured environments — the kind of work that is easy for a human hand and eye but has been stubbornly difficult for machines.

That's what CapSen does. We give robots the ability to perceive and understand the physical world, so they can do useful work in it. The math started with ping pong. The mission started with a belief that robots should be able to see. Twelve years later, we're still making that belief real, one factory at a time.